Kubernetes Architecture: The Modular Core

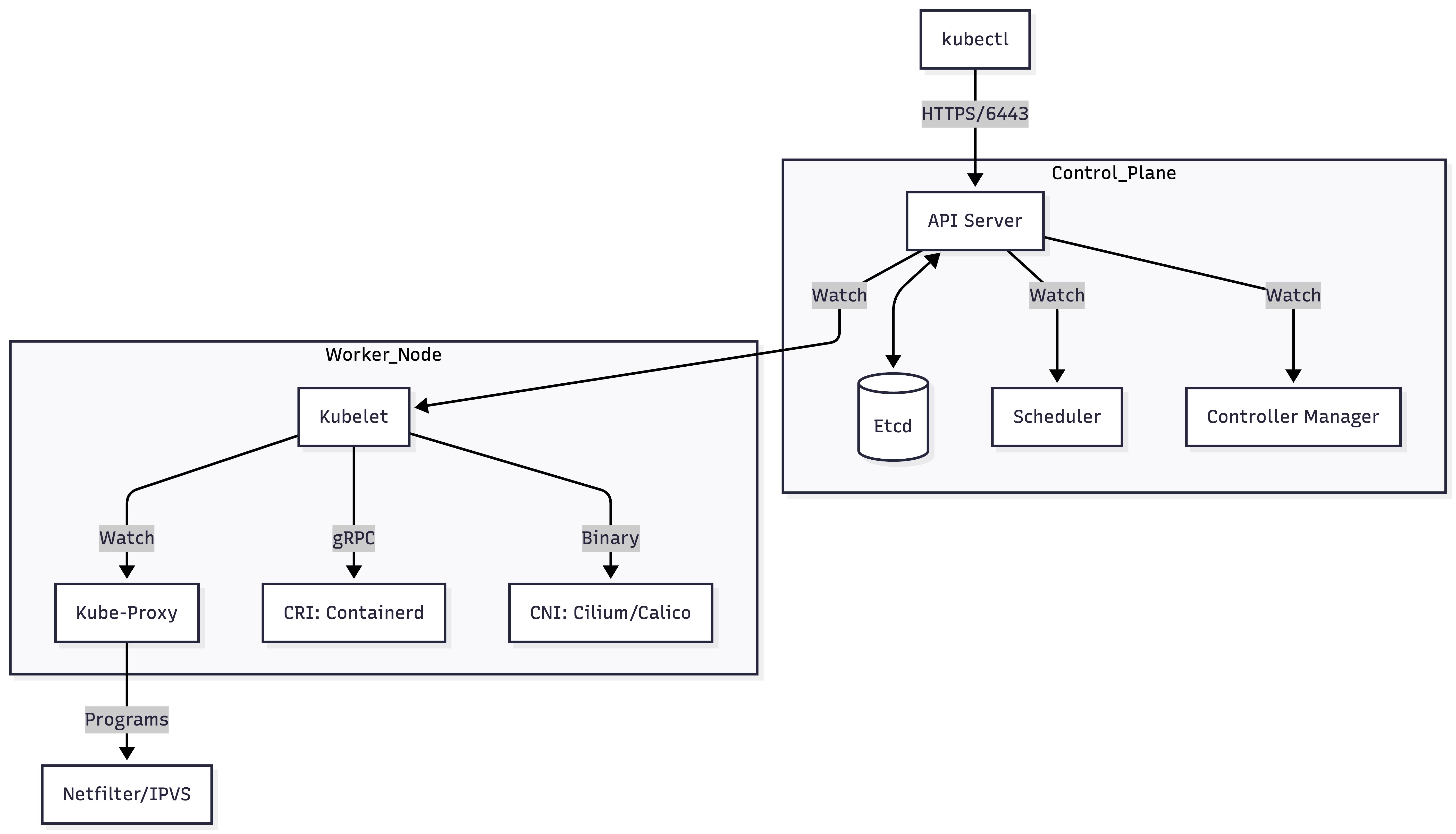

Kubernetes is a distributed system built from decoupled control loops. It follows a "Level-Triggered" design philosophy: each component continuously reconciles the Current State with the Desired State held in a central, strongly consistent data store (etcd).

1. CLUSTER TOPOLOGY & HA

A production-grade cluster segregates responsibilities into two distinct planes:

- The Control Plane (The Brain): Manages the cluster state, scheduling, and API entry points.

- The Data/Compute Plane (The Muscle): Executes the actual containerized workloads.

High Availability (HA) & Quorum

In production, the Control Plane must be redundant.

- API Server: Stateless; can be scaled horizontally behind a Load Balancer.

- Scheduler & Controller Manager: An instance can run on every control plane node, but only one "Leader" is active at a time (elected via a Lease object in the API).

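- Etcd: Uses the Raft Consensus Algorithm. It requires a Quorum ($\lfloor n/2 \rfloor + 1$ of $n$ members) to commit writes.

  - 3 Nodes: Quorum is 2; tolerates 1 failure.

  - 5 Nodes: Quorum is 3; tolerates 2 failures.

  - Architect Note: Use an odd number of members. An even-sized cluster tolerates no more failures than the next smaller odd size (4 members still tolerate only 1 failure), and an even network split leaves neither side with Quorum.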
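
The quorum arithmetic above can be checked with a few lines of Go. This is a minimal sketch; the function names `quorum` and `faultTolerance` are made up for illustration and are not part of etcd.

```go
package main

import "fmt"

// quorum returns the minimum number of members that must agree
// before a Raft cluster of size n can commit a write.
func quorum(n int) int {
	return n/2 + 1 // integer division implements floor(n/2)
}

// faultTolerance returns how many members can fail while the
// remaining members still form a quorum.
func faultTolerance(n int) int {
	return n - quorum(n)
}

func main() {
	for n := 1; n <= 6; n++ {
		fmt.Printf("members=%d quorum=%d tolerated_failures=%d\n",
			n, quorum(n), faultTolerance(n))
	}
}
```

Running it shows that going from 3 to 4 members (or from 5 to 6) raises the quorum without raising the number of tolerated failures, which is the reason for the odd-number recommendation.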

2. DEFAULT PORTS & FIREWALL REFERENCE

| Component | Port | Protocol | Responsibility |
|---|---|---|---|
| kube-apiserver | 6443 | HTTPS | Entry point for all users and components. |
| etcd | 2379 / 2380 | HTTPS | 2379: Client API; 2380: Peer-to-Peer (Raft). |
| kubelet | 10250 | HTTPS | Control plane to node communication (logs, exec). |
| kube-scheduler | 10259 | HTTPS | Secure metrics/health check. |
| kube-controller-manager | 10257 | HTTPS | Secure metrics/health check. |
| NodePort Range | 30000-32767 | TCP/UDP | External traffic entry via every Node IP. |

3. CONTROL PLANE INTERNALS

3.1 Kube-API Server (The Hub)

The API Server is the only component that communicates with etcd. It is a RESTful gateway with a strictly defined request lifecycle:

- Authentication (AuthN): Validates "Who are you?" (X509 Certs, OIDC, Tokens).

- Authorization (AuthZ): Validates "What can you do?" (RBAC).

- Admission Control:

  - Mutating: Modifies the request (e.g., injecting a Sidecar).

  - Validating: Final check (e.g., checking ResourceQuotas).

- Persistence: Writes the validated object to `etcd`.
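
The ordering of the request lifecycle matters: each stage can reject the request, and nothing is persisted unless every stage passes. The sketch below models that as a chain of functions. All types, rules, and error messages here are hypothetical stand-ins, not the API Server's real handlers.

```go
package main

import (
	"errors"
	"fmt"
)

// Request is a hypothetical, heavily simplified API request.
type Request struct {
	User   string
	Verb   string
	Labels map[string]string
}

// stage is one step of the pipeline; it may mutate or reject the request.
type stage func(*Request) error

func authenticate(r *Request) error {
	if r.User == "" {
		return errors.New("unauthenticated")
	}
	return nil
}

func authorize(r *Request) error {
	// Toy rule standing in for RBAC: only "admin" may delete.
	if r.Verb == "delete" && r.User != "admin" {
		return errors.New("forbidden")
	}
	return nil
}

func mutate(r *Request) error {
	if r.Labels == nil {
		r.Labels = map[string]string{}
	}
	r.Labels["injected"] = "true" // stands in for sidecar injection
	return nil
}

func validate(r *Request) error {
	if len(r.Labels) > 8 { // stands in for a quota check
		return errors.New("rejected by validating admission")
	}
	return nil
}

// handle runs the stages in order and stops at the first rejection.
// The request is appended to store only if every stage passes.
func handle(r *Request, store *[]Request) error {
	for _, s := range []stage{authenticate, authorize, mutate, validate} {
		if err := s(r); err != nil {
			return err
		}
	}
	*store = append(*store, *r)
	return nil
}

func main() {
	var store []Request
	fmt.Println(handle(&Request{User: "dev", Verb: "create"}, &store))
	fmt.Println(handle(&Request{User: "dev", Verb: "delete"}, &store))
	fmt.Println("objects persisted:", len(store))
}
```

Note that mutation runs before validation, so the validating stage always sees the final form of the object.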

The "Watch" Mechanism: Instead of polling, components (Kubelet, Scheduler) open a long-lived streaming HTTP connection (typically over HTTP/2) called a Watch. When an object changes in etcd, the API Server pushes the corresponding event to every watcher of that resource.
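
The push model can be illustrated with Go channels. This is only an in-process analogy under stated assumptions: the `Hub` type is hypothetical and stands in for the API Server, and each channel stands in for one watch stream.

```go
package main

import "fmt"

// Event is a simplified change notification.
type Event struct {
	Type string // "ADDED", "MODIFIED", or "DELETED"
	Key  string
}

// Hub is a hypothetical stand-in for the API Server's fan-out.
type Hub struct {
	watchers []chan Event
}

// Watch registers a watcher and returns its event stream.
func (h *Hub) Watch() <-chan Event {
	ch := make(chan Event, 16) // buffered so Publish does not block in this sketch
	h.watchers = append(h.watchers, ch)
	return ch
}

// Publish pushes one event to every registered watcher.
func (h *Hub) Publish(e Event) {
	for _, ch := range h.watchers {
		ch <- e
	}
}

// Close ends all streams.
func (h *Hub) Close() {
	for _, ch := range h.watchers {
		close(ch)
	}
}

func main() {
	hub := &Hub{}
	scheduler := hub.Watch()
	kubelet := hub.Watch()

	hub.Publish(Event{Type: "ADDED", Key: "pods/default/web"})
	hub.Publish(Event{Type: "MODIFIED", Key: "pods/default/web"})
	hub.Close()

	for e := range scheduler {
		fmt.Println("scheduler saw", e.Type, e.Key)
	}
	for e := range kubelet {
		fmt.Println("kubelet saw", e.Type, e.Key)
	}
}
```

The watchers never ask "has anything changed?"; they simply block on their stream until the hub delivers an event.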

3.2 Etcd (The State Store)

- Consistency: Implements MVCC (Multi-Version Concurrency Control). Every change increments the store revision, which Kubernetes surfaces as an object's `resourceVersion`.

- History: Old versions are kept until Compaction removes them.
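
A toy model helps show what "versions are kept until Compaction" means in practice. The `Store` type below is a hypothetical in-memory sketch, not etcd's implementation: writes append a new version under a growing revision, reads can target an older revision, and compaction discards history.

```go
package main

import "fmt"

// version is one historical value of a key.
type version struct {
	rev   int64
	value string
}

// Store is a toy MVCC store: writes append versions instead of overwriting.
type Store struct {
	rev  int64
	hist map[string][]version
}

func NewStore() *Store { return &Store{hist: map[string][]version{}} }

// Put records a new version and returns the new global revision.
func (s *Store) Put(key, value string) int64 {
	s.rev++
	s.hist[key] = append(s.hist[key], version{s.rev, value})
	return s.rev
}

// Get returns the value of key as it was at revision rev.
func (s *Store) Get(key string, rev int64) (string, bool) {
	vs := s.hist[key]
	for i := len(vs) - 1; i >= 0; i-- {
		if vs[i].rev <= rev {
			return vs[i].value, true
		}
	}
	return "", false
}

// Compact drops versions superseded at or before rev. For each key it
// keeps the newest version at or below rev, so reads at rev still work.
func (s *Store) Compact(rev int64) {
	for k, vs := range s.hist {
		keep := 0
		for i, v := range vs {
			if v.rev <= rev {
				keep = i
			}
		}
		s.hist[k] = vs[keep:]
	}
}

func main() {
	s := NewStore()
	r1 := s.Put("/pods/web", "replicas=1")
	r2 := s.Put("/pods/web", "replicas=3")

	old, _ := s.Get("/pods/web", r1)
	fmt.Println("before compaction, read at r1:", old)

	s.Compact(r2)
	_, ok := s.Get("/pods/web", r1)
	fmt.Println("after compaction, r1 still readable:", ok)
}
```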

- Backup Command:

      ETCDCTL_API=3 etcdctl snapshot save /tmp/snapshot.db \
        --cacert=/etc/kubernetes/pki/etcd/ca.crt \
        --cert=/etc/kubernetes/pki/etcd/server.crt \
        --key=/etc/kubernetes/pki/etcd/server.key

3.3 Kube-Scheduler (The Placement Engine)

The scheduler runs a two-phase cycle for each Pending Pod that has no node assigned, then binds the Pod to the winning node by setting spec.nodeName:

- Filtering (Predicates): Removes nodes that lack resources, carry taints the Pod does not tolerate, or fail affinity rules.

- Scoring (Priorities): Ranks the remaining nodes. Examples: `ImageLocality` (does the node already have the image?) and `NodeResourcesLeastAllocated` (spread strategy).
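
The filter-then-score cycle can be sketched in a few functions. Everything below is a simplified assumption for illustration: the `Node` and `Pod` fields, the single CPU dimension, and the fixed image bonus of 500 are invented, and the real scheduler combines many weighted plugins.

```go
package main

import "fmt"

// Node and Pod carry only the fields this sketch needs.
type Node struct {
	Name     string
	FreeCPU  int // millicores
	Tainted  bool
	HasImage bool
}

type Pod struct {
	CPU            int // millicores requested
	ToleratesTaint bool
}

// filter keeps only the nodes on which the pod could run at all.
func filter(p Pod, nodes []Node) []Node {
	var feasible []Node
	for _, n := range nodes {
		if n.FreeCPU < p.CPU {
			continue // not enough resources
		}
		if n.Tainted && !p.ToleratesTaint {
			continue // untolerated taint
		}
		feasible = append(feasible, n)
	}
	return feasible
}

// score prefers nodes with more CPU left after placement (a spread
// strategy) and adds a bonus if the image is already present.
func score(p Pod, n Node) int {
	s := n.FreeCPU - p.CPU
	if n.HasImage {
		s += 500
	}
	return s
}

// schedule returns the best feasible node name, or "" if none fits.
func schedule(p Pod, nodes []Node) string {
	best, bestScore := "", -1
	for _, n := range filter(p, nodes) {
		if sc := score(p, n); sc > bestScore {
			best, bestScore = n.Name, sc
		}
	}
	return best
}

func main() {
	nodes := []Node{
		{Name: "a", FreeCPU: 4000, Tainted: true},
		{Name: "b", FreeCPU: 2000, HasImage: true},
		{Name: "c", FreeCPU: 2200},
	}
	fmt.Println("chosen node:", schedule(Pod{CPU: 500}, nodes))
}
```

An empty result corresponds to a Pod that stays Pending because no node survived filtering.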

3.4 Kube-Controller Manager (The Enforcer)

Contains multiple controllers (Node, Deployment, EndpointSlice). Each is a loop:

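    // Pseudocode: every controller runs a loop of this shape.
    for {
        desired := getDesiredState() // what the API objects declare
        current := getCurrentState() // what is actually observed
        if desired != current {
            reconcile(desired, current) // act to close the gap
        }
    }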
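
A runnable variant of the loop makes the level-triggered behaviour concrete: the controller compares states, so it converges no matter how the gap arose or which events were missed. The `cluster` type and the one-replica-per-step rule are hypothetical simplifications.

```go
package main

import "fmt"

// cluster is a hypothetical in-memory stand-in for observed state.
type cluster struct{ running int }

// reconcile takes one step toward the desired replica count and
// reports whether it had to change anything.
func reconcile(desired int, c *cluster) bool {
	switch {
	case c.running < desired:
		c.running++ // start one replica
	case c.running > desired:
		c.running-- // stop one replica
	default:
		return false // already converged
	}
	return true
}

// converge loops until desired and current state match and returns
// the number of corrective steps taken.
func converge(desired int, c *cluster) int {
	steps := 0
	for reconcile(desired, c) {
		steps++
	}
	return steps
}

func main() {
	c := &cluster{running: 1}
	steps := converge(3, c)
	fmt.Println("steps:", steps, "running:", c.running)

	c.running = 0 // simulate every replica crashing
	steps = converge(3, c)
	fmt.Println("steps:", steps, "running:", c.running)
}
```

A real controller blocks on watch events or a resync timer between iterations instead of spinning, but the comparison at the heart of the loop is the same.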

4. COMPUTE PLANE INTERNALS

4.1 Kubelet (The Node Commander)

Kubelet is the "manager" of the node. It typically runs directly on the host as a systemd service rather than in a container.

- PodSpec Processing: It receives PodSpecs and ensures the containers are running and healthy.

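- PLEG (Pod Lifecycle Event Generator): Detects container state changes by periodically relisting containers from the runtime; the Kubelet uses these events to sync Pods and report their status back to the API.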

- Static Pods: Kubelet watches `/etc/kubernetes/manifests` and runs any YAML found there (used for bootstrapping the control plane).
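
The core idea behind PLEG is a diff between two snapshots of container state. The sketch below assumes a hypothetical `relist` function that receives the previous and current snapshots as maps from container ID to state; the event strings are invented for illustration.

```go
package main

import (
	"fmt"
	"sort"
)

// relist compares two snapshots (container ID -> state) and returns
// one event per container whose state changed, appeared, or vanished.
func relist(old, cur map[string]string) []string {
	var events []string
	for id, state := range cur {
		if prev, ok := old[id]; !ok || prev != state {
			events = append(events, id+":"+state)
		}
	}
	for id := range old {
		if _, ok := cur[id]; !ok {
			events = append(events, id+":removed")
		}
	}
	sort.Strings(events) // map iteration order is random; sort for stable output
	return events
}

func main() {
	previous := map[string]string{"web": "running", "db": "running"}
	current := map[string]string{"web": "exited", "cache": "running"}
	for _, e := range relist(previous, current) {
		fmt.Println(e)
	}
}
```

If taking the snapshot itself becomes slow, for example because the runtime is overloaded, the events arrive late, which is the situation the "PLEG is not healthy" message reports.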

4.2 Kube-Proxy (The Network Orchestrator)

Programs the host's networking stack to handle Service IPs.

- iptables Mode (Default): Uses netfilter rules that are matched sequentially. Scalability: $O(n)$ where $n$ is the number of services.

- IPVS Mode (Production): Uses hash tables. Scalability: $O(1)$. Supports advanced LB algorithms like Least Connection.
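
The difference between the two complexity classes can be seen in a toy comparison of a linear rule walk against a hash-table lookup. This is a deliberately simplified model with invented names (`rule`, `linearLookup`, `hashLookup`); it does not reproduce how kube-proxy actually lays out its chains or IPVS tables.

```go
package main

import "fmt"

// rule maps one Service IP to one backend, like a single match rule.
type rule struct {
	serviceIP string
	backend   string
}

// linearLookup walks the rules in order and also reports how many
// rules were examined before a match was found.
func linearLookup(rules []rule, ip string) (string, int) {
	for i, r := range rules {
		if r.serviceIP == ip {
			return r.backend, i + 1
		}
	}
	return "", len(rules)
}

// hashLookup resolves the backend with a single table access.
func hashLookup(table map[string]string, ip string) string {
	return table[ip]
}

func main() {
	const n = 5000
	rules := make([]rule, 0, n)
	table := make(map[string]string, n)
	for i := 0; i < n; i++ {
		ip := fmt.Sprintf("10.96.%d.%d", i/256, i%256)
		backend := fmt.Sprintf("backend-%d", i)
		rules = append(rules, rule{ip, backend})
		table[ip] = backend
	}

	last := rules[n-1].serviceIP
	b, examined := linearLookup(rules, last)
	fmt.Println("linear:", b, "after examining", examined, "rules")
	fmt.Println("hash:  ", hashLookup(table, last), "after one table lookup")
}
```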

5. MODULAR INTERFACES: CRI, CNI, CSI

Kubernetes abstracts hardware/runtime logic into three pluggable interfaces. CRI and CSI are gRPC-based; CNI plugins are executables invoked with a JSON configuration.

5.1 CRI (Container Runtime Interface)

Allows Kubelet to communicate with runtimes (containerd, CRI-O).

- Socket Path: `/run/containerd/containerd.sock`

- Workflow: Kubelet sends a gRPC request to the runtime -> the runtime pulls the image -> the runtime invokes `runc` to create Linux namespaces/cgroups.
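
The call sequence can be sketched against a fake runtime. The method names below mirror CRI RPC names, but the `Runtime` interface, its simplified signatures, and the `fakeRuntime` type are assumptions made for illustration; the real API is gRPC with much richer request and response messages.

```go
package main

import "fmt"

// Runtime is a simplified, hypothetical subset of what a CRI runtime offers.
type Runtime interface {
	RunPodSandbox(pod string) string
	PullImage(image string)
	CreateContainer(sandboxID, name, image string) string
	StartContainer(containerID string)
}

// fakeRuntime records the calls it receives instead of doing real work.
type fakeRuntime struct{ calls []string }

func (f *fakeRuntime) RunPodSandbox(pod string) string {
	f.calls = append(f.calls, "RunPodSandbox")
	return "sandbox-" + pod
}

func (f *fakeRuntime) PullImage(image string) {
	f.calls = append(f.calls, "PullImage")
}

func (f *fakeRuntime) CreateContainer(sandboxID, name, image string) string {
	f.calls = append(f.calls, "CreateContainer")
	return sandboxID + "/" + name
}

func (f *fakeRuntime) StartContainer(containerID string) {
	f.calls = append(f.calls, "StartContainer")
}

// startPod issues the calls in the order a kubelet-like client would:
// sandbox first, then image, then create and start the container.
func startPod(rt Runtime, pod, name, image string) string {
	sb := rt.RunPodSandbox(pod)
	rt.PullImage(image)
	id := rt.CreateContainer(sb, name, image)
	rt.StartContainer(id)
	return id
}

func main() {
	rt := &fakeRuntime{}
	id := startPod(rt, "web", "nginx", "nginx:latest")
	fmt.Println("container:", id)
	fmt.Println("calls:", rt.calls)
}
```

Because the Kubelet only depends on the interface, containerd and CRI-O can be swapped without changing Kubelet code.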

5.2 CNI (Container Network Interface)

Handles Pod networking.

- Workflow: On the Kubelet's request, the container runtime invokes the CNI plugin (Calico, Flannel, Cilium) -> CNI creates a veth-pair -> CNI joins the Pod to the network bridge/mesh -> CNI assigns an IP.

- Standard: Every Pod in a cluster must be able to communicate with every other Pod without NAT.
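
The "assigns an IP" step is usually delegated to an IPAM component that hands out addresses from the node's Pod CIDR. The allocator below is a minimal sketch under stated assumptions: the `IPAM` type is hypothetical, it reserves the first host address for a gateway, and it ignores details such as the broadcast address and address release.

```go
package main

import (
	"errors"
	"fmt"
	"net/netip"
)

// IPAM hands out sequential addresses from one node's Pod CIDR.
type IPAM struct {
	prefix netip.Prefix
	next   netip.Addr
	leased map[string]netip.Addr // pod name -> assigned IP
}

// NewIPAM parses the CIDR and skips the network address plus one
// address assumed to be reserved for the bridge/gateway.
func NewIPAM(cidr string) (*IPAM, error) {
	p, err := netip.ParsePrefix(cidr)
	if err != nil {
		return nil, err
	}
	p = p.Masked()
	return &IPAM{
		prefix: p,
		next:   p.Addr().Next().Next(),
		leased: map[string]netip.Addr{},
	}, nil
}

// Assign returns the pod's existing IP or leases the next free one.
func (a *IPAM) Assign(pod string) (netip.Addr, error) {
	if ip, ok := a.leased[pod]; ok {
		return ip, nil
	}
	if !a.next.IsValid() || !a.prefix.Contains(a.next) {
		return netip.Addr{}, errors.New("pod CIDR exhausted")
	}
	ip := a.next
	a.leased[pod] = ip
	a.next = ip.Next()
	return ip, nil
}

func main() {
	ipam, err := NewIPAM("10.244.1.0/24")
	if err != nil {
		panic(err)
	}
	for _, pod := range []string{"web", "db", "web"} {
		ip, _ := ipam.Assign(pod)
		fmt.Println(pod, "->", ip)
	}
}
```

Because each node allocates from its own non-overlapping CIDR, Pod IPs are unique cluster-wide, which is what makes NAT-free Pod-to-Pod routing possible.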

5.3 CSI (Container Storage Interface)

Decouples storage vendors from the Kubernetes core.

- Mechanism: Uses a "Sidecar" pattern.

  - External-Provisioner: Watches for PVCs, calls Cloud API to create a disk.

  - External-Attacher: Attaches the disk to the Node.

  - Node-Driver: Kubelet calls this to mount the disk into the Pod's filesystem.
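
The three roles above form a strict sequence: a volume must be provisioned before it is attached, and attached to a node before it can be mounted there. The state machine below is a hypothetical sketch of that ordering; the `Volume` type and function names are invented and do not correspond to the CSI gRPC messages.

```go
package main

import (
	"errors"
	"fmt"
)

// Volume tracks how far a disk has progressed through the lifecycle.
type Volume struct {
	Name  string
	State string // "", "provisioned", "attached", or "mounted"
	Node  string
	Path  string
}

// provision stands in for the External-Provisioner creating the disk.
func provision(v *Volume) error {
	if v.State != "" {
		return errors.New("volume already exists")
	}
	v.State = "provisioned"
	return nil
}

// attach stands in for the External-Attacher attaching the disk to a node.
func attach(v *Volume, node string) error {
	if v.State != "provisioned" {
		return errors.New("volume must be provisioned before attach")
	}
	v.State, v.Node = "attached", node
	return nil
}

// mount stands in for the node driver mounting the disk for a Pod.
func mount(v *Volume, node, path string) error {
	if v.State != "attached" || v.Node != node {
		return errors.New("volume must be attached to this node before mount")
	}
	v.State, v.Path = "mounted", path
	return nil
}

func main() {
	v := &Volume{Name: "data"}
	fmt.Println("mount too early:", mount(v, "node-1", "/mnt/data"))
	fmt.Println("provision:", provision(v))
	fmt.Println("attach:", attach(v, "node-1"))
	fmt.Println("mount:", mount(v, "node-1", "/mnt/data"))
	fmt.Println("final state:", v.State)
}
```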

6. VISUAL: THE DATA FLOW

7. ARCHITECT'S VERIFICATION CHECKLIST

| Objective | Command |
|---|---|
| Check API Health | `kubectl get --raw='/readyz?verbose'` (`/healthz` is deprecated) |
| Verify Component Health | `kubectl get componentstatuses` (Note: Deprecated, use events/logs) |
| Inspect CRI Runtimes | `crictl pods` and `crictl ps` |
| Audit API Groups | `kubectl api-resources` |
| Trace Scheduling | `kubectl describe pod |
| Check Node Network | `ipvsadm -Ln` (If using IPVS mode) |

Pro-Tip: Debugging "PLEG is not healthy"

This error means the Kubelet's periodic relist of container states is taking too long, usually because the node or the container runtime is overloaded or unresponsive.

- Check CPU/Memory of the Node.

- Check the Container Runtime logs: `journalctl -u containerd -f`.

- Verify the CRI socket is responsive: `crictl info`.